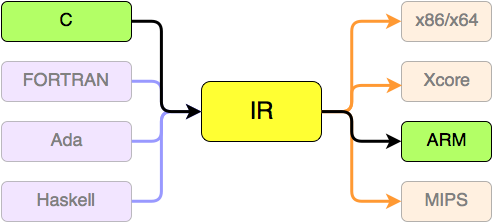

If you have programmed in C for any length of time, you already know that your C code gets compiled down to assembly, then to machine code for whatever platform you are building for. What you may not have realized is that this lets you experiment with assembly by writing and half-compiling C. If you can get the compiler to stop right after it has written the assembly code, you can get in and see how it thought C constructs should be represented at the lowest level. Luckily, GCC's -S option does just that.

This is a very powerful learning tool if used properly. It can lead to a confusing mess if used improperly. Let's lay down some ground rules:

- Know your compiler

- Use simple (read: small) C programs only

- Avoid the standard C library

- Know your operating system's ABI

Know Your Compiler

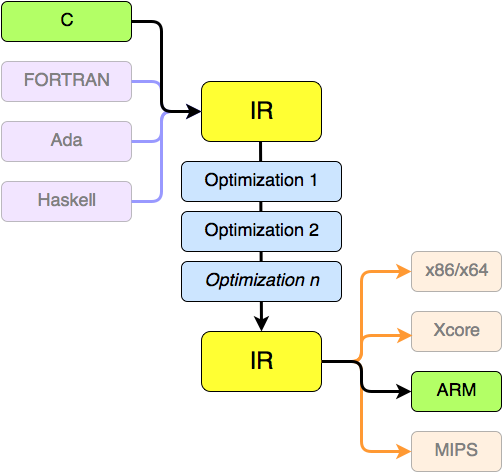

GCC doesn't like you. Sure, it will compile your programs for you, and it rarely complains unless you do it wrong, but first and foremost, GCC is not out to please you; the job of the compiler is to produce machine code, not human-readable assembly. Its second priority is to produce the fastest, most efficient and most secure machine code possible.

Unfortunately, fast, efficient and secure code is not always the most comprehensible. Luckily for GCC, no human ever reads the code it generates. GCC can get away with all kinds of crazy optimizations because 99.9% of the world cares how well the resulting binary runs, not how clean its machine code is.

To illustrate this, let's write the same program twice: once in C, which we will compile down to assembly, and once in hand-written x86 assembly.

First, the assembly version:

$ cat simple-asm.s

.text

.globl main

main:

movl $0, %eax

ret

$ gcc simple-asm.s -o simple-asm

Here is the C version:

$ cat simple-c.c

int main(int argc, char **argv, char **env)

{

return 0;

}

$ gcc simple-c.c -o simple-c

Run both of these programs and verify that they do, well, nothing. The $? shell variable should also be 0 after each binary runs.

Now it's time for some real fun. Let's see how GCC thinks we should write our assembly code to properly implement the C code from simple-c.c.

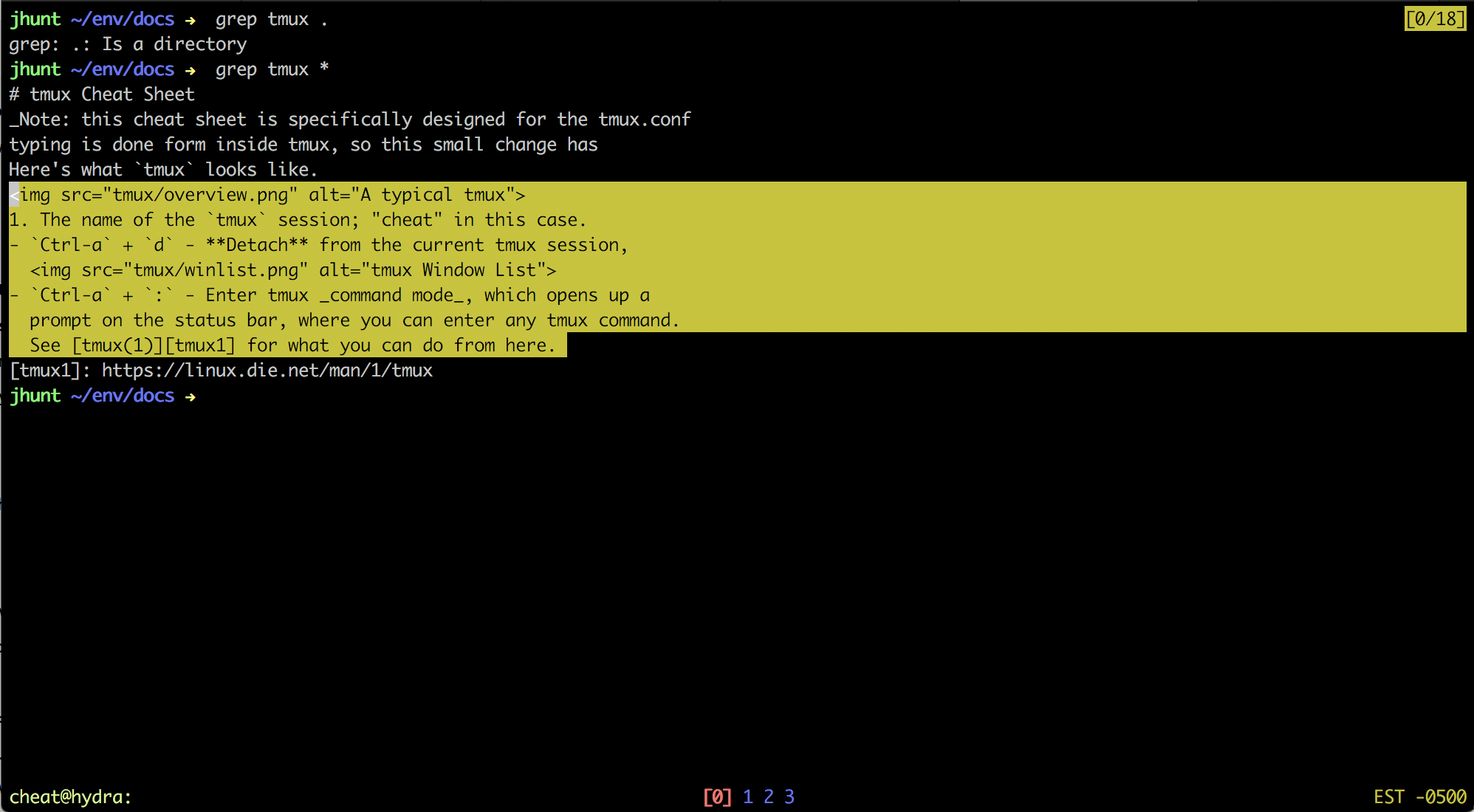

$ gcc -S simple-c.c -o simple-c.s

The simple-c.s file will contain the generated assembly we are looking for. Here's what I got:

$ cat simple-c.s

.file "simple-c.c"

.text

.globl main

.type main, @function

main:

.LFB0:

.cfi_startproc

pushq %rbp

.cfi_def_cfa_offset 16

.cfi_offset 6, -16

movq %rsp, %rbp

.cfi_def_cfa_register 6

movl %edi, -4(%rbp)

movq %rsi, -16(%rbp)

movq %rdx, -24(%rbp)

movl $0, %eax

popq %rbp

.cfi_def_cfa 7, 8

ret

.cfi_endproc

.LFE0:

.size main, .-main

.ident "GCC: (Ubuntu/Linaro 4.6.1-9ubuntu3) 4.6.1"

.section .note.GNU-stack,"",@progbits

(Due to differences in platforms, architectures and GCC versions, your output may not match mine exactly. YMMV.)

That's a lot more code. Some of it comes from the actual C code we wrote, some of it is bookkeeping for the assembler, linker and debugger, and some of it is just GCC being GCC.

The .file directive records which C source file this assembly was generated from, for use in debugging information. It can be removed (at the cost of debugging ability).

The .globl main directive marks the main label as a global symbol, visible to the linker. Since we are using GCC to assemble and link, we need to keep this. Otherwise, we'll get linker errors about an undefined reference to 'main' in _start. The .type directive, on the other hand, can be removed safely.

The lines that start with .cfi_ are Call Frame Information directives, used for stack unwinding and debugging. They can safely be removed here; on many GCC builds, compiling with -fno-asynchronous-unwind-tables keeps them from being emitted in the first place.

Everything after .LFE0: can also safely be ignored; these are directives that as and ld use for their own purposes. For example, the .ident directive identifies that I used GCC v4.6.1 on an Ubuntu Linux box to generate the assembly code.

To prove that these parts of the assembly are non-critical, let's remove them, and try to build again:

$ cat bare.s

.text

.globl main

main:

pushq %rbp

movq %rsp, %rbp

movl %edi, -4(%rbp)

movq %rsi, -16(%rbp)

movq %rdx, -24(%rbp)

movl $0, %eax

popq %rbp

ret

$ gcc -o bare bare.s

Understanding the Generated Assembly

With all that extra cruft out of the way, we can get back to the task at hand: seeing how GCC translated our simple C program into assembly. Let's step through the code, instruction by instruction, to see what is going on.

pushq %rbp

Push the value of the RBP (frame pointer) register onto the stack. RBP still holds the frame pointer of our caller (the startup code that GCC links in around main), so we save it here and restore it before returning. The return address itself was already pushed by the call instruction that got us here. Later, we will see a popq instruction that restores the frame pointer. (Note: I did all of this on x86_64-linux, so pointers are 64-bit values, hence the q in pushq.)

movq %rsp, %rbp

Copy the 64-bit value from the RSP (stack pointer) register into the frame pointer register. Keep in mind that GCC defaults to AT&T assembly syntax, in which the operands are specified source,destination.

movl %edi, -4(%rbp)

movq %rsi, -16(%rbp)

movq %rdx, -24(%rbp)

Copy the incoming arguments from their registers into the stack frame. EDI houses the 32-bit int argc argument to main, RSI contains the 64-bit pointer char **argv, and RDX contains the 64-bit pointer char **env.

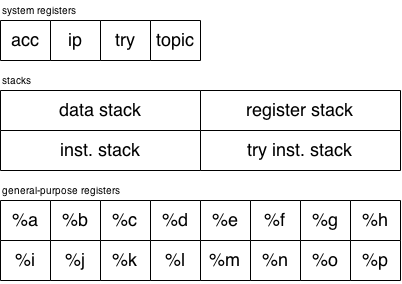

Always remember what ABI you are compiling against. Since my work was done on x86_64-linux, the calling convention states that integer and pointer arguments go in %rdi, %rsi, %rdx, %rcx, %r8, and %r9, in that order. This is different from the ABI for 32-bit platforms!
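
To see the register assignment for yourself, a small function like the following can be compiled with gcc -S. This is only a sketch: mix_widths and its parameter names are made up for illustration, and the register notes in the comment assume x86_64-linux with optimizations off.

```c
#include <string.h>

/*
 * Hypothetical helper, for illustration only.
 * On x86_64-linux the three arguments should arrive as:
 *   count -> %edi  (a 32-bit int, the low half of %rdi)
 *   text  -> %rsi  (a 64-bit pointer)
 *   extra -> %rdx  (a 64-bit long)
 * and the result should go back to the caller in %rax.
 */
long mix_widths(int count, const char *text, long extra)
{
    return (long)count + (long)strlen(text) + extra;
}
```

Since the file has no main, stop at the assembly stage (gcc -S) rather than trying to link it into a binary.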

movl $0, %eax

Copy the literal value 0 into the 32-bit EAX register; this is the return value of main. Later, we'll modify the C program to prove this out.
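
A quick way to convince yourself that EAX carries the return value is to return something other than 0. A minimal sketch, with answer being a made-up name; the exact instruction may vary with your GCC version and flags.

```c
/* Hypothetical example: compiled with gcc -S and no optimization, the body
 * should contain something like "movl $42, %eax" where the listing above
 * has "movl $0, %eax". */
int answer(void)
{
    return 42;
}
```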

popq %rbp

Restore the caller's frame pointer. With RBP popped, the return address is on top of the stack again, which is exactly where ret expects to find it.

ret

Return to the calling code: ret pops the return address off the stack and jumps to it.

That's a lot of instructions for a decidedly uninteresting program, which leads me to my next point...

Use Simple C Programs

Even without all of the code that GCC adds, the code generated from our C file did a lot more than our hand-written assembly. To see why, let's try rewriting the program to leave out the things we don't need, regenerate the assembly and see what changed.

Signature of main

Every C programmer has practically memorized the signature for the main function: int main(int argc, char **argv, char **environ). That may be the correct way to define main, but it is not the required way. Since we don't actually use argc, argv or environ in our program, let's try removing them from the signature:

int main(void) {

return 0;

}

Translating that into assembly, and removing the GCC extensions, we get the following assembly:

.text

.globl main

main:

pushq %rbp

movq %rsp, %rbp

movl $0, %eax

popq %rbp

ret

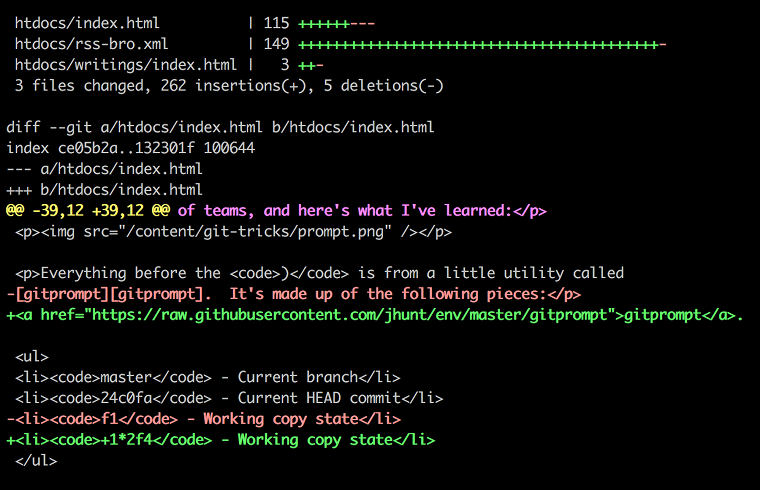

Better. Without the parameter list in our definition of main, GCC omitted the instructions that copied the argument registers onto the stack. In addition, we can start to see what bits of assembly are used to do what. Here's a diff of bare.s and no-sig.s:

--- bare.s 2012-07-09 12:47:55.054788188 -0400

+++ no-sig.s 2012-07-09 12:47:26.530787076 -0400

@@ -3,9 +3,6 @@

main:

pushq %rbp

movq %rsp, %rbp

- movl %edi, -4(%rbp)

- movq %rsi, -16(%rbp)

- movq %rdx, -24(%rbp)

movl $0, %eax

popq %rbp

ret

The only thing that was removed from the assembly by not specifying the parameter list was the code that spilled the arguments to the stack. We still have the pushq/popq instructions that deal with the frame pointer register, and the movl instruction for our return value.

What happens if we remove the return statement?

void main(void)

{

// how very zen...

}

GCC still compiles it, although the C standard expects main to return int, so expect a warning with -Wall. Let's take a look at the assembly:

.text

.globl main

main:

pushq %rbp

movq %rsp, %rbp

popq %rbp

ret

Success! The movl statement that put the return value of 0 in EAX is gone! Now that we have baseline C code that will produce minimal assembly, let's get creative.
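
As a first small experiment, the sketch below gives the frame pointer something to do. Unoptimized GCC output typically addresses locals as negative offsets from %rbp, just like the spilled arguments we saw earlier. The names scale and factor are made up for illustration, and the exact offsets will vary.

```c
/* Hypothetical example. Without optimization, expect x to be copied from
 * %edi into a slot below %rbp, and factor to live in another such slot. */
int scale(int x)
{
    int factor = 3;     /* a local variable, stored in the stack frame */
    return x * factor;  /* the product ends up in %eax */
}
```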

syscalls - Lifeblood of the Operating System

Operating systems and user-land programs need a way to interact. On most OSes, including UNIX and Linux, this is done through system calls, which transfer control to the kernel for a well-defined operation. Linux system calls are documented in section 2 of the man pages. We'll start our real journey into C-assisted assembly with the write system call.

write, unsurprisingly, writes data to a file descriptor. It's time for that old favorite, the Hello, World application!

void main(void)

{

write(1, "Hello, World!\n", 14);

/* 14 = strlen("Hello, World!\n"); the terminating '\0' is not written */

}

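Compile that to a binary and make sure it works. (GCC will warn that write is implicitly declared, and recent versions treat that as an error unless you add #include <unistd.h>.) Go ahead, I'll wait.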

Working? Great. Let's examine the assembly.

.section .rodata

.LC0:

.string "Hello, World!\n"

.text

.globl main

main:

# standard frame pointer management

pushq %rbp

movq %rsp, %rbp

movl $14, %edx # 3rd arg to write -- number of bytes

movl $.LC0, %esi # 2nd arg to write -- buffer to write

movl $1, %edi # 1st arg to write -- output file descriptor

movl $0, %eax

call write

# standard frame pointer management

popq %rbp

ret

(I reformatted the assembly code a bit, introducing newlines to aid legibility)

This new program actually introduces two concepts: constant data and system calls. As such, we get a new section, called .rodata, which stores our statically initialized "Hello, World!\n" string. GCC adds a .LC0 label, which is used later to refer to the memory location where our string sits.

The three movl instructions that follow the frame setup prime the argument registers for our write call: EDI contains the file descriptor 1 (standard output), ESI is the memory address of our string, and EDX houses the third argument, the number of bytes that should be output (the constant 14).

Even though GCC put the register moves in reverse order, you don't have to. We are dealing with discrete destinations, and not a stack where order would matter.

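The movl $0, %eax instruction zeroes EAX before the call. Because we called write without a prototype in scope, GCC has to assume it might take a variable number of arguments, and the x86_64 ABI uses AL to tell such functions how many vector registers carry arguments (none here). The call instruction then transfers control to write, the C library's thin wrapper around the actual system call. The rest of the assembly is the boilerplate stuff we are used to seeing (frame pointer reset and return control).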
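
For comparison, here is a sketch of the same call with the prototype in scope. The headers are standard POSIX; say_hello and msg are made-up names. With unistd.h included, GCC knows write is not variadic, so the movl $0, %eax before the call will most likely disappear from the generated assembly.

```c
#include <string.h>
#include <unistd.h>

/* The message lives in read-only data, like the .LC0 string above. */
static const char msg[] = "Hello, World!\n";

/* Hypothetical wrapper: writes msg to standard output and returns
 * whatever write() returns (bytes written, or -1 on error). */
ssize_t say_hello(void)
{
    /* strlen(msg) is 14; the terminating '\0' is not sent */
    return write(STDOUT_FILENO, msg, strlen(msg));
}
```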

Avoid the Standard C Library

What heresy is this? Avoid the C library? But it contains so much useful functionality that we don't want to have to write in assembly!

If you are actually trying to write 100% assembly programs, by all means, use the libc. However, for the purpose of learning assembly from idiomatic C, you're better off not using handy functions like printf and instead using system calls like write.

Why?

The generated code for system calls is much easier to understand, and since we are only interested in the mechanics of assembly, that's a huge win. To illustrate, try compiling this small snippet to assembly:

void main(void)

{

printf("Hello, World!\n");

}

You'll probably get something like this (cruft removed):

.section .rodata

.LC0:

.string "Hello, World!"

.text

.globl main

main:

pushq %rbp

movq %rsp, %rbp

movl $.LC0, %edi

call puts

popq %rbp

ret

Looks easy enough. In fact, it's less code than the write system call example. But what if we print more than just a single literal string?

#include <stdio.h>

void main(void)

{

printf("%s, %d, %d, %d, %d, %d\n",

"1-5 = ", 1, 2, 3, 4, 5);

}

And here's the assembly:

.section .rodata

.LC0:

.string "%s, %d, %d, %d, %d, %d\n"

.LC1:

.string "1-5 = "

.text

.globl main

main:

pushq %rbp

movq %rsp, %rbp

subq $16, %rsp

movl $.LC0, %eax

movl $5, (%rsp)

movl $4, %r9d

movl $3, %r8d

movl $2, %ecx

movl $1, %edx

movl $.LC1, %esi

movq %rax, %rdi

movl $0, %eax

call printf

leave

ret

First of all, we ran out of argument registers and had to move to the stack (thus the movl $5, (%rsp) instruction). Secondly, this version calls printf but the last version called puts. What gives?

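The C library is a lot like GCC: they both exist to produce fast machine code, not predictable, clear machine code. In this case the substitution is GCC's doing. It treats printf as a built-in function, and when the format is a constant string that ends in a newline and takes no arguments, it swaps in the cheaper puts call (minus the newline). Once real format arguments show up, it has to call printf proper.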
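
The puts-versus-printf difference can be reproduced side by side in one file. This is a sketch with made-up function names; whether the first call really becomes puts depends on your GCC version and flags.

```c
#include <stdio.h>

/* Constant string ending in '\n', no format arguments, result unused:
 * GCC may emit "call puts" here instead of "call printf". */
void greet_plain(void)
{
    printf("Hello, World!\n");
}

/* A real format argument, and the result is used: this one has to
 * remain a call to printf. Returns the number of characters printed. */
int greet_numbered(int n)
{
    return printf("Hello, World #%d!\n", n);
}
```

Compile it with gcc -S and compare the two call instructions.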

I'm not saying that you should never use standard library functions. There is a time and a place for playing with libc to see how the assembly under the hood works. That being said, I strongly recommend that you save that type of exploration for when you are proficient enough in reading (and writing) assembly.

Know Your ABI

ABI stands for Application Binary Interface, and just like an API (Application Programming Interface) it specifies the exact mechanics of interfacing with another component, but at the machine code level.

The C Standard Library, for example, defines an API for dealing with system calls like creat. If you look up the man page for creat (man 2 creat) the calling convention is documented right at the top:

NAME

open, creat - open and possibly create a file or device

SYNOPSIS

#include <sys/types.h>

#include <sys/stat.h>

#include <fcntl.h>

int open(const char *pathname, int flags);

int open(const char *pathname, int flags, mode_t mode);

int creat(const char *pathname, mode_t mode);

If you want to use the creat call from C, you call it with the path name of the file (as a null-terminated character string), and a mode parameter. It will return to you an integer, the semantics of which are defined further on in the manual.
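
In C, following that API looks something like the sketch below. The helper name create_empty_file and the 0644 mode are illustrative choices, not anything the man page prescribes.

```c
#include <fcntl.h>
#include <sys/stat.h>
#include <sys/types.h>
#include <unistd.h>

/* Hypothetical helper: create (or truncate) a file and close it again.
 * Returns 0 on success, -1 if creat() or close() fails. */
int create_empty_file(const char *pathname)
{
    int fd = creat(pathname, 0644);  /* rw-r--r--, subject to the umask */
    if (fd < 0)
        return -1;
    return close(fd);
}
```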

Similarly, bits of machine code (including your program and the operating system) need to agree on how they will communicate using machine registers, memory and the stack. This is the ABI.

Because different machine architectures have different memory models, hardware registers and bus architectures, they also have different ABIs. This is why object code compiled for Linux on the 32-bit x86 platform can't be linked against code compiled for the 64-bit x86_64 platform, even though the two share most of their instruction set.

Normally, application programmers don't care about the ABI; it's something the compiler graciously takes care of. But in the world of assembly, you are the compiler, so you have to know these things.

The examples in this article (unless otherwise marked) are written for the Intel x86_64-linux ABI. There are notable differences between 32-bit and 64-bit Linux from the ABI standpoint, the most prominent of which is register usage.

On x86_32-linux, the default calling convention passes every function argument on the stack, pushed in right-to-left order. On x86_64-linux, the stack is only used for parameters that are too big to pass in registers, and for integer or pointer parameters beyond the 6th. Registers for x86_64 calls are, in order, RDI, RSI, RDX, RCX, R8, and R9. If you are looking at assembly that does lots of pushl instructions (but never a pushq) before calling out to another function, it is most likely x86_32.
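
A function with more than six integer arguments makes the overflow onto the stack visible. A sketch, assuming x86_64-linux; sum8 and call_sum8 are made-up names.

```c
/* Hypothetical example. On x86_64-linux, a through f should arrive in
 * %rdi, %rsi, %rdx, %rcx, %r8 and %r9; g and h do not fit in registers,
 * so the caller passes them on the stack. */
long sum8(long a, long b, long c, long d,
          long e, long f, long g, long h)
{
    return a + b + c + d + e + f + g + h;
}

/* Compile with gcc -S and look at how this caller sets up the call. */
long call_sum8(void)
{
    return sum8(1, 2, 3, 4, 5, 6, 7, 8);
}
```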

Onward, and Upward

Now you should have the context and tools to assist you in learning assembly by writing C. I will be publishing more articles on understanding calling conventions, structures, loops and more, so stay tuned!
